This guide describes how to integrate ChatGPT with Wildix.

Created: February 2023

Permalink: https://wildix.atlassian.net/wiki/x/AQBxC


Introduction

...

- Chatbot integrated into the WebRTC Kite Chatbot: use it as a personal assistant in Collaboration / x-bees, or as a Kite chatbot for external users

- Speech To Text (STT) integration

Related sources:

- Wildix licensing

- For the basic Kite Chatbot settings, consult the Wildix WebRTC Kite Admin Guide

- Wildix Business Intelligence - Artificial Intelligence services

- OpenAI API documentation: https://platform.openai.com/docs/introduction

- To learn more about ChatGPT or the Kite Chatbot, watch the Wildix Tech Talk webinar "Maximizing Efficiency with ChatGPT"

...

- Download the archive: https://drive.google.com/file/d/1QM3nW0e2Wuo7FD2Gs168loOAMa8s47Dn/view?usp=share_link

- Open the archive, navigate to /kite-xmpp-bot-master/app and open config.js with an editor of your choice

Replace the following values with your own:

- domain: 'XXXXXX.wildixin.com' - domain name of the PBX

- service: 'xmpps://XXXXXX.wildixin.com:443' - XMPP service URL of the PBX (the same domain name, with the xmpps:// prefix and port 443)

- username: 'XXXX' - extension number of the Kitebot user (do not change it)

- password: 'XXXXXXXXXXXX' - password of the Kitebot user

- authorization: 'Bearer sk-XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX' - ChatGPT authorization token: your OpenAI API key, preceded by "Bearer "

- organization: 'org-XXXXXXXXXXXXXXXXXXXXXXXXX' - OpenAI organization ID

- model: 'text-davinci-003' - the OpenAI model used to generate the replies. At the time of writing (February 2023), text-davinci-003 was the most capable GPT-3.5 model available through OpenAI's Completions API; check the OpenAI documentation for the models currently supported

- temperature: 0.1 - controls the "creativity" of the generated text, that is, how much randomness is introduced into the model's output; low values such as 0.1 produce more focused, predictable answers

- externalmaxtokens: 250 - maximum number of tokens in a reply to external Kite users contacting the chatbot

- internalmaxtokens: 500 - maximum number of tokens in a reply to internal users contacting the chatbot

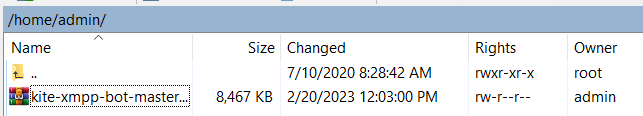

- Upload the archive to the /home/admin/ directory using WinSCP or any alternative SFTP client

- Connect to the web terminal and log in as the superuser with the su command (password: wildix)

Install Node.js:

```
apt-get install nodejs
```

Unzip the archive:

```
unzip /home/admin/kite-xmpp-bot-master.zip
```

Copy the chatbot folder to /mnt/backups:

```
cp -r ./kite-xmpp-bot-master /mnt/backups/
```

Copy the chatbot.service.txt file to the systemd directory as chatbot.service, reload systemd so it picks up the new unit, enable the chatbot service so it runs in the background, then start it:

```
cp /mnt/backups/kite-xmpp-bot-master/chatbot.service.txt /etc/systemd/system/chatbot.service
systemctl daemon-reload
systemctl enable chatbot.service
systemctl start chatbot.service
```

Verify that the chatbot is running, either by checking for the node process with ps:

```
ps aux | grep node
```

or simply by sending the bot a message.
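The config.js values listed earlier can be pictured as a single Node.js object. The sketch below assumes config.js holds a plain object with exactly those keys (the actual file in the archive may be organized differently) and adds a hypothetical helper, findUnreplacedPlaceholders, which is not part of the archive: it flags settings that still contain the XXXX placeholders, a common cause of failed logins to the PBX or to the OpenAI API.

```javascript
// Sketch only: ASSUMES config.js holds a plain object with the keys described
// in this guide. The real file in the archive may be organized differently.
const config = {
  domain: 'XXXXXX.wildixin.com',              // domain name of the PBX
  service: 'xmpps://XXXXXX.wildixin.com:443', // XMPP service URL of the PBX
  username: 'XXXX',                           // Kitebot user extension number
  password: 'XXXXXXXXXXXX',                   // Kitebot user password
  authorization: 'Bearer sk-XXXXXXXXXXXX',    // OpenAI API key, prefixed with "Bearer "
  organization: 'org-XXXXXXXXXXXX',           // OpenAI organization ID
  model: 'text-davinci-003',                  // OpenAI model name
  temperature: 0.1,                           // low value = less random output
  externalmaxtokens: 250,                     // reply limit for external Kite users
  internalmaxtokens: 500,                     // reply limit for internal users
};

// Hypothetical helper (not part of the archive): returns the keys whose string
// values still contain a run of four or more 'X' placeholder characters.
function findUnreplacedPlaceholders(cfg) {
  return Object.entries(cfg)
    .filter(([, value]) => typeof value === 'string' && /X{4,}/.test(value))
    .map(([key]) => key);
}

const pending = findUnreplacedPlaceholders(config);
if (pending.length > 0) {
  console.warn(`Replace the placeholder values of: ${pending.join(', ')}`);
}
```

A check like this is cheap to run before uploading the archive; note that it would also flag a genuine value that happens to contain XXXX.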

...

- Set STT & TTS language

- Set DIAL_OPTIONS -> g to continue Dialplan execution after the Speech to text application; this lets the Dialplan loop so that the caller can ask multiple questions

- Speech to text: the application plays the voice prompt, captures the caller's response and converts it to text. For an in-depth guide, see Dialplan applications Admin Guide - Speech to Text (STT) Dialplan application: https://wildix.atlassian.net/wiki/spaces/DOC/pages/30281834/Dialplan+applications+-+Admin+Guide#Dialplanapplications-AdminGuide-SpeechtoTextSTT

- NoOp(${RECOGNITION_RESULT}) - a debug application that shows the result of speech recognition; it can be safely removed

- Set(RECOGNITION_RESULT=${SHELL(echo "${RECOGNITION_RESULT}" | tr -d \'):0:-1}) - this removes any single quotes from the recognized text and stores the result back in the RECOGNITION_RESULT variable. Single quotes would otherwise break the single-quoted JSON payload of the curl request further down. The :0:-1 substring removes the trailing newline character added by the shell output

- ExecIf($[ "${SHELL(echo '${RECOGNITION_RESULT}' | grep -i 'price'):0:-1}"!="" ]?Set(PROMPT_ENGINEERING=Only respond with price in numerical format.)) - this checks whether the RECOGNITION_RESULT variable contains the word "price" (case-insensitive) and, if so, sets the PROMPT_ENGINEERING variable to the given instruction. This is a simple example of conditional prompt engineering with this setup

- Set(chatGPT=${SHELL(curl https://api.openai.com/v1/completions -H "Content-Type: application/json" -H "Authorization: Bearer sk-XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX" -d '{"model": "text-davinci-003", "prompt": "${RECOGNITION_RESULT}. ${PROMPT_ENGINEERING}", "temperature": 0.5, "max_tokens": 500}'):0:-1}) - this sends an HTTP request to the OpenAI Completions API to generate a response from the RECOGNITION_RESULT and PROMPT_ENGINEERING variables, and assigns the resulting JSON string to the chatGPT variable. Replace sk-XXX… with your own OpenAI API key. text-davinci-003 is the model name; you can learn more about the available models in the dedicated Tech Talk webinar or in the OpenAI documentation

- Set(PrettyChatGPT=${JSONPRETTY(chatGPT)}) - this formats the chatGPT variable as pretty-printed JSON and assigns the result to the PrettyChatGPT variable

- Set(result=${SHELL(echo "${PrettyChatGPT}" | grep -oP '(?<=nn).*'):0:-2}) - this extracts the response text from the PrettyChatGPT variable: grep returns the text that follows the "nn" sequence preceding the reply in the JSON output, and the :0:-2 substring trims the last two characters

- Play sound -> ${result}. End of response. - this converts the text in the result variable into a text-to-speech message and plays the ChatGPT reply back to the caller, followed by "End of response" to mark where the reply ends (you can change this phrase to your preference)
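The Dialplan steps above shell out to echo, tr, grep and curl. For comparison, the sketch below expresses the same prompt-building and reply-extraction logic in Node.js, the runtime the Kite chatbot already uses. It is illustrative only: it makes no network call, the function names are hypothetical, and it assumes the response format of OpenAI's legacy Completions endpoint, where the generated text is in choices[0].text. The model name, temperature and token limit are copied from the curl command above.

```javascript
// Sketch of the Dialplan logic above, written in Node.js for illustration.
// ASSUMPTIONS: the request/response shapes of OpenAI's legacy Completions
// endpoint ({ choices: [{ text }] }); function names are hypothetical.

// Mirrors the ExecIf step: add an instruction when "price" appears (any case).
function promptEngineering(text) {
  return /price/i.test(text) ? 'Only respond with price in numerical format.' : '';
}

// Mirrors the curl -d payload. trim() drops the trailing newline (the :0:-1
// step); JSON.stringify escapes quotes, so single quotes need not be removed.
function buildCompletionRequest(recognition, { model = 'text-davinci-003', temperature = 0.5, maxTokens = 500 } = {}) {
  const question = recognition.trim();
  return JSON.stringify({
    model,
    prompt: `${question}. ${promptEngineering(question)}`.trim(),
    temperature,
    max_tokens: maxTokens,
  });
}

// Mirrors JSONPRETTY + grep: parse the JSON response and return the text of
// the first choice, without the leading newlines the model typically adds.
function extractReply(responseJson) {
  const data = JSON.parse(responseJson);
  if (!Array.isArray(data.choices) || data.choices.length === 0) {
    // Error responses carry an "error" object instead of "choices".
    throw new Error(data.error ? data.error.message : 'No choices in response');
  }
  return data.choices[0].text.trim();
}
```

Sending the body still requires an HTTP POST to https://api.openai.com/v1/completions with the Content-Type and Authorization headers shown in the curl command. Parsing the JSON, rather than matching the "nn" sequence, does not depend on the reply starting with two newlines, and it surfaces API errors such as an invalid key instead of playing an empty result to the caller.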

...